Vision Charting Agent

Every wound gets photographed. Every wound assessment still gets typed by hand — in a separate app, from memory. Designing the AI agent that turns the photograph into the completed chart entry.

Context: Wound assessment requires nurses to document measurements, appearance, and progression across 30+ fields — spanning 3 separate applications today. Nurses already photograph wounds as part of care, but those images generate nothing. The photograph gets stored. Every measurement is still done manually, every field still gets typed by hand, from memory, after the fact.

At a Glance

Showcased at Oracle Health Leadership Summit 2025

Upcoming controlled eval

Feature-flagged release at BayCare Health System

In Progress

~8-15 min

Estimated Time Saved by Vision Charting Agent

The Problem

A single wound assessment takes 15+ minutes per wound, and longer when patients have multiple wounds. The process spans image capture in one tool, clinical charting in another, and progression notes written manually from memory. Vision Charting Agent changes this: the nurse photographs the wound, the AI analyzes the image and suggests clinical values, the nurse reviews and signs, and a wound progression summary is generated automatically.

My Role

Cross-functional: PM, engineering, data science

Research

6 research streams:

National Wound Care Strategy Program - training materials from nursing director covering wound capture best practices, consent, patient dignity, and identifiable information handling

Internal nursing working group - bi-weekly with Oracle nursing consultants

External nursing working group - bi-weekly with nurses at US & UK client locations, joint effort with EHRM Desktop designer

Gold data collection - remote sessions led by data scientists

In-person shadowing - PM-led, with the team dialed in remotely for the wrap-up

Oracle Health Summit 2025 Demo - Sep 2025, learnings from the proof of concept presented by our Director of Nursing team

What We Learned

15+ minutes per wound is the current documentation burden - longer for wound care nurses managing multiple wounds per shift

Wound care spans 3 apps today - nurses switch constantly between 3 applications for image capture, charting, and notes

BSNs and wound care nurses have different needs - BSNs chart fewer wound DTAs; wound care nurses manage extensive assessments and progression notes

Consent and image handling are established practice - nurses already photograph wounds today; training exists, clinical image protocols exist

The Solution

Vision Charting Agent introduces a special capability that activates within the Discrete Charting Agent's workflow when a nurse expresses intent to chart a wound. The nurse photographs the wound, the agent returns suggested clinical values — length, width, color — marked with a subtle bloom bar indicating AI origin. The nurse reviews, accepts or edits, and signs. Editing removes the bloom bar — the value becomes nurse-entered.

Key Design Decisions

The Pivot: Standalone → Unified workflow

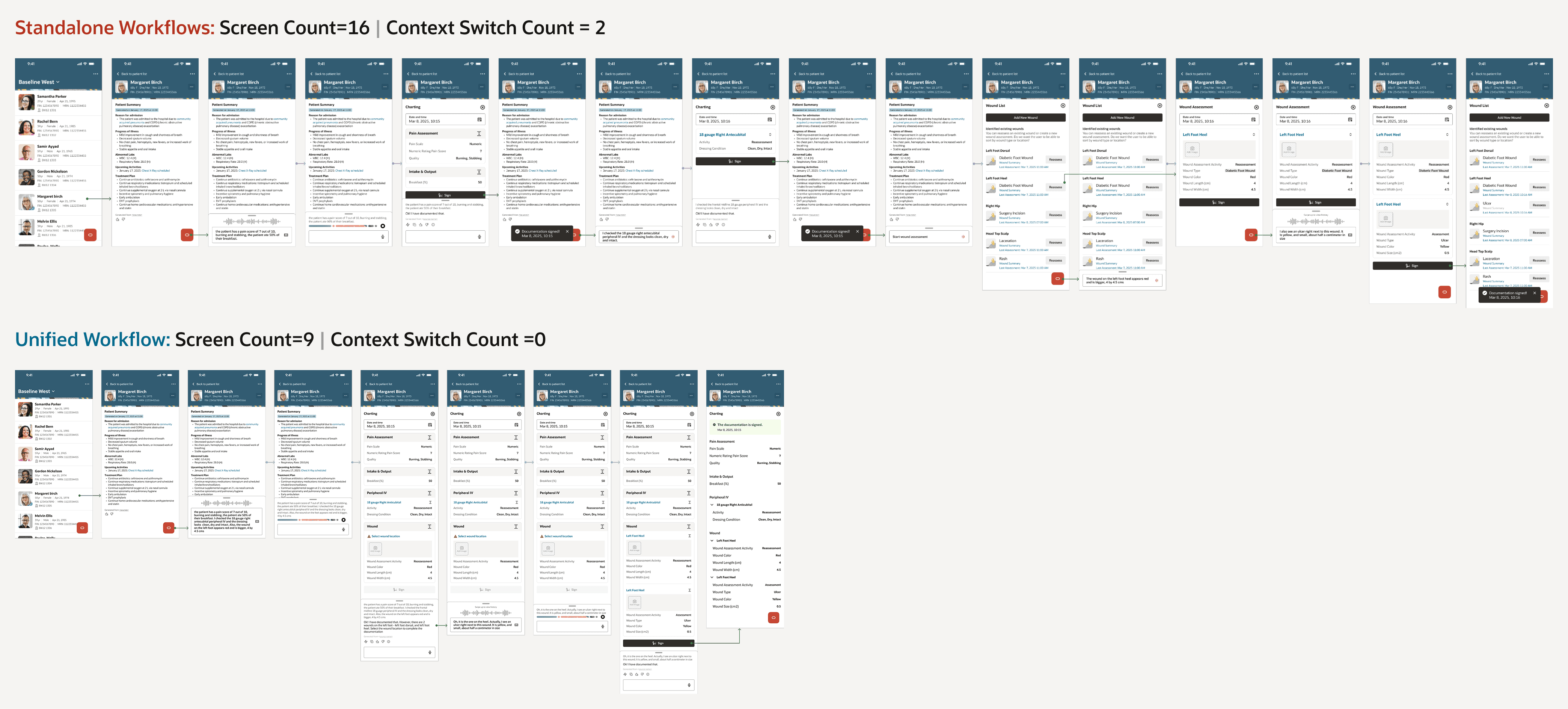

Context: In standalone workflows, a nurse has to go into individual workflows to chart dynamic group entities such as wounds, lines, tubes, drains, and IVs, and into a different workflow to chart non-dynamic group entities. In a unified workflow, the nurse can chart both dynamic group and non-dynamic group entities in one place. For example, the screens above show how a nurse switches from non-dynamic group → IVs → Wounds through a series of 16 screen interactions in standalone workflows, while the same charting is completed within 9 screen interactions in a unified workflow.

Vision Charting started as its own workflow, with its own entry point, wound list, body map, and charting experience. The design was strong. The structural problem wasn't visible yet: if we built a standalone workflow for wounds, we'd need one for every assessment type that uses dynamic groups. And once clients created their own dynamic groups, we'd have rebuilt exactly the fragmentation nurses live with today.

For a team offsite, I prepared a unified workflow design, a standalone scaling breakdown, and 25+ ambiguity scenarios. A morning-of Zoom with nurses from multiple client locations validated the direction: "I think of charting as charting. I don't want to go to different places for different things." That quote walked into the room with us.

The agent interaction: AI suggests, nurse decides

The agent analyzes the wound image and returns suggested values, displayed with a subtle bloom bar indicating AI origin. The nurse reviews and accepts, or edits directly on the canvas. Editing removes the bloom bar, and clinical ownership transfers back to the nurse without ceremony. This distinction matters: the agent suggests clinical values rather than just capturing nurse input, which is why we are working to get this agent classified as a medical device. The nurse is always the clinical decision-maker.
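The provenance rule described above (AI-suggested values carry the bloom bar, and editing hands ownership to the nurse) can be sketched as a small state model. This is a minimal illustration, not the product's implementation: the names `Origin`, `FieldValue`, `suggest`, `accept`, `edit`, and `can_sign` are all hypothetical. Two behaviors are assumptions the case study does not state: that an accepted but unedited value keeps its bloom bar, and that every field must be reviewed before signing.

```python
from dataclasses import dataclass, replace
from enum import Enum


class Origin(Enum):
    AI_SUGGESTED = "ai_suggested"
    NURSE_ENTERED = "nurse_entered"


@dataclass(frozen=True)
class FieldValue:
    """One charted field, e.g. wound length. All names here are hypothetical."""
    name: str
    value: str
    origin: Origin
    reviewed: bool = False

    @property
    def shows_bloom_bar(self) -> bool:
        # The bloom bar marks AI origin only; it says nothing about review state.
        return self.origin is Origin.AI_SUGGESTED


def suggest(name: str, value: str) -> FieldValue:
    """Value returned by the agent from the wound image; not yet reviewed."""
    return FieldValue(name, value, Origin.AI_SUGGESTED)


def accept(field: FieldValue) -> FieldValue:
    """Nurse accepts the suggestion as-is (assumption: the bloom bar stays)."""
    return replace(field, reviewed=True)


def edit(field: FieldValue, new_value: str) -> FieldValue:
    """Nurse edits the value: it becomes nurse-entered and the bloom bar goes."""
    return replace(field, value=new_value, origin=Origin.NURSE_ENTERED, reviewed=True)


def can_sign(fields: list[FieldValue]) -> bool:
    """Assumption: signing requires that the nurse has reviewed every field."""
    return all(f.reviewed for f in fields)


if __name__ == "__main__":
    length = suggest("length_cm", "4.2")
    width = edit(suggest("width_cm", "2.0"), "2.3")
    print(length.shows_bloom_bar, width.shows_bloom_bar)
    print(can_sign([length, width]), can_sign([accept(length), width]))
```

Keeping origin and review state as separate attributes means the interface can show where a value came from without implying whether the nurse has looked at it yet.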

Impact

Reflections

The best design decisions sometimes mean undoing previous ones

The standalone workflow wasn't wrong when we built it - it was the right answer to the information we had at that time. What changed wasn't the design. It was the frame. Seeing the scaling problem clearly required stepping back far enough to see the whole system, not just the workflow in front of us.

Bringing a complete case to the table

Walking into the offsite with a unified design, a scaling breakdown, ambiguity scenarios, and morning-of nurse validation meant the team could make a confident decision in the room rather than leaving with more questions. The preparation was the design work.

What's Next

Controlled evaluation at BayCare begins once development is complete. Annotation editing, where nurse corrections feed back into model training, is planned for a future phase.

The unified pattern established here sets the foundation for every dynamic-group-based assessment in CAAM Nursing going forward.